AI Liquid Cooling at System Scale

A recent presentation at Data Center World highlights how system simulation is becoming a critical capability for designing, procuring, and operating AI data centers.

AI has been embedded in digital infrastructure for years, quietly powering services like recommendation engines, security systems, and automation. What changed in late 2022 was accessibility. With the rise of generative AI tools like ChatGPT, advanced reasoning and multi‑step problem solving became available at scale. That shift fundamentally altered data center workloads.

What began as retrieval‑based processing has evolved into long chains of inference executed by AI agents that plan, reason, and adapt. As NVIDIA CEO Jensen Huang noted in his GTC 2026 keynote, “The inference inflection has arrived.”

For data center leaders, this inflection point shows most clearly in one place: cooling, and increasingly liquid cooling.

At last week’s Data Center World in Washington, DC, the Spark Session “Physics-based System Simulation for AI Data Center Cooling” highlighted how system simulation is becoming a critical capability for designing, procuring, and operating AI data centers under these new conditions.

AI Workloads are Redefining Thermal Reality

AI infrastructure is driving a rapid and sustained increase in power density that traditional cooling assumptions can no longer accommodate.

Key indicators include:

- Rack power densities rising from 2–5 kW per rack a decade ago to 30–50+ kW today, with 100+ kW per rack already in sight

- AI‑optimized servers projected to represent nearly half of total data center power consumption by 2030

- Cooling consistently accounting for 40 percent or more of total facility energy use

- Global data center electricity demand expected to more than double by the end of the decade

At these densities, a reliable liquid cooling strategy matters more than ever. Liquid cooling also tightens the coupling between thermal, hydraulic, and control domains, which makes it more important to understand how the system as a whole behaves under fast, nonlinear load transients.
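To give a sense of scale for these rack densities, the steady-state energy balance Q = m_dot * cp * dT relates a rack's heat load to the coolant flow needed to carry it away. The sketch below is a back-of-the-envelope illustration only: it assumes water-like coolant properties, assumes all rack heat goes into the liquid loop, and uses a 10 K loop temperature rise. The function name and all numeric values other than the rack powers are assumptions, not figures from the presentation.

```python
# Assumed coolant properties (plain water near room temperature).
WATER_CP_J_PER_KG_K = 4186.0
WATER_DENSITY_KG_PER_M3 = 997.0


def required_flow_lpm(heat_load_kw: float, delta_t_k: float) -> float:
    """Coolant flow in L/min needed to absorb heat_load_kw with a coolant
    temperature rise of delta_t_k, from Q = m_dot * cp * dT."""
    if heat_load_kw < 0 or delta_t_k <= 0:
        raise ValueError("heat load must be >= 0 and temperature rise > 0")
    mass_flow_kg_s = heat_load_kw * 1000.0 / (WATER_CP_J_PER_KG_K * delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / WATER_DENSITY_KG_PER_M3
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


if __name__ == "__main__":
    # Rack powers taken from the ranges quoted above; 10 K rise is assumed.
    for rack_kw in (5, 50, 100):
        flow = required_flow_lpm(rack_kw, delta_t_k=10.0)
        print(f"{rack_kw:>4} kW rack: {flow:6.1f} L/min")
```

Under these assumptions the required flow scales linearly with rack power, so a 100 kW rack needs twenty times the flow of a 5 kW rack at the same temperature rise. What this steady-state estimate cannot show is how the loop behaves while the load is changing, which is where system simulation comes in.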

Liquid Cooling is a System Challenge, Not a Component One

Modern AI data centers behave less like collections of independent subsystems and more like tightly integrated machines. In liquid‑cooled environments especially, small local changes can propagate rapidly across the entire plant.

Key characteristics include:

- AI training loads can swing from near idle to peak power in seconds.

- Thermal, hydraulic, and control responses are tightly coupled across racks, CDUs, pumps, and chillers.

- Component cut sheets describe isolated behavior but do not explain system response.

For engineering managers and operators, this means that decisions based solely on rated performance or steady‑state assumptions carry increasing risk. Selecting hardware without understanding its behavior in the full cooling system increasingly amounts to making high-impact decisions without visibility into their downstream consequences. Understanding true behavior requires system‑level simulation.

Why Physics‑Based System Simulation Matters More Now

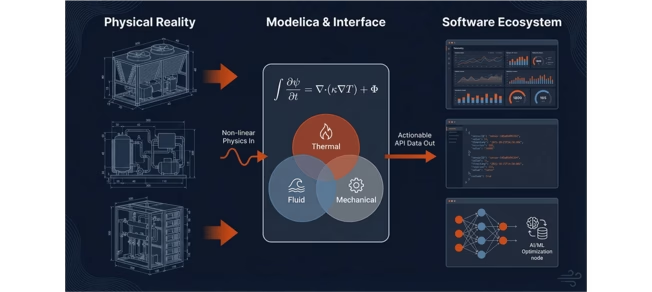

System‑level models for simulating and analyzing cooling systems can use:

- Modelica, an open, equation‑based, multi‑physics modeling language

- FMI (Functional Mock‑up Interface) for model exchange across tools and organizations

- API‑driven workflows that expose physical behavior to operational software, analytics platforms, and AI workflows

This approach allows thermal, fluid, mechanical, and control dynamics to be modeled together in a single coherent framework. The model becomes a shared digital asset that supports multiple teams and workflows rather than a one‑off analysis.

How does Simulation Provide Value Across the Data Center Lifecycle?

- Design: Quantifying Trade‑Offs in Liquid Cooling Architectures

System‑level simulation enables engineers to evaluate cooling topologies and equipment sizing decisions in the context of realistic AI workloads. Instead of optimizing for a single design point, teams can assess behavior across broad operating envelopes. Questions like these can be answered quantitatively:

- How should CDUs, pumps, and heat exchangers be sized for transient AI loads?

- What are the trade‑offs between different liquid cooling architectures?

- How sensitive is overall efficiency to component‑level design choices?
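One way to get a first feel for the sensitivity question is an idealized part-load sweep. The sketch below assumes a fixed hydraulic system curve (pressure drop proportional to flow squared), so pump hydraulic power scales with the cube of flow, and it assumes flow scales with load divided by loop temperature rise. The function name and sweep values are illustrative assumptions; a real system model would also capture pump efficiency curves, valve behavior, and control limits.

```python
def relative_pump_power(load_fraction: float, delta_t_ratio: float = 1.0) -> float:
    """Pump hydraulic power relative to the design point.

    load_fraction: heat load divided by design heat load.
    delta_t_ratio: loop temperature rise divided by design temperature rise.
    Assumes flow ~ load / dT and power ~ flow**3 (fixed system curve).
    """
    if load_fraction < 0 or delta_t_ratio <= 0:
        raise ValueError("load_fraction must be >= 0 and delta_t_ratio > 0")
    flow_ratio = load_fraction / delta_t_ratio
    return flow_ratio ** 3


if __name__ == "__main__":
    # Sweep an assumed operating envelope instead of a single design point.
    for delta_t_ratio in (1.0, 1.5):
        for load_fraction in (0.25, 0.5, 0.75, 1.0):
            power = relative_pump_power(load_fraction, delta_t_ratio)
            print(f"dT x{delta_t_ratio:.1f}, load {load_fraction:4.0%}: "
                  f"pump power {power:6.1%} of design")
```

At half load with the design temperature rise, the idealized pump power drops to one eighth of its design value, which illustrates why sizing against a single design point can hide large part-load effects.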

- Procurement: From Cut Sheets to System Performance

Procurement decisions increasingly depend on understanding how equipment behaves inside a specific data center environment.

Simulation‑ready component models allow owner/operators to evaluate equipment based on system‑level metrics such as energy, water use, and transient response. Manufacturers can also demonstrate performance advantages that are invisible in datasheets.

For suppliers of valves, CDUs, pumps, and heat exchangers, providing simulation‑ready models is becoming a competitive differentiator rather than an academic exercise.

- Operations: Managing Reality Beyond Design Conditions

Even well‑designed facilities face conditions that were not fully anticipated. Examples include extreme weather, evolving GPU generations, fouling, partial failures, and shifting load profiles.

The same system model developed during design can be reused to test control strategy changes, analyze failure modes, and assess capacity expansion scenarios without risking uptime. For operations teams, this enables informed decisions under uncertainty.

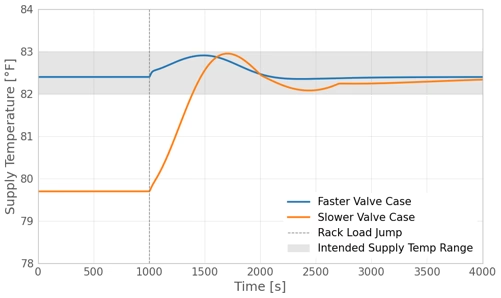

Case Study: One Valve, System‑Wide Consequences

This case examined how a single parameter change, the response time of a control valve in a liquid-cooled distribution loop, can create ripple effects across the broader cooling system.

At the component level, the faster valve delivered a steadier rack supply temperature than the slower one under a rapid AI load transient. At the system level, the model also revealed a benefit for the chiller upstream of the valve. With the slower valve, the rack supply temperature excursion caused by the load transient would have exceeded the design limit unless the supply temperature was suppressed before the load jump. That suppression, however, requires moving the upstream chiller away from its efficient operating point.
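The qualitative effect can be reproduced with a toy lumped model. The sketch below is not the model used in the case study: it assumes a single coolant thermal mass, a valve whose position follows its command with a first-order lag, cooling power proportional to valve position, and a simple load feedforward plus proportional feedback controller. Every parameter value, including the 2 K excursion limit, is a hypothetical chosen for illustration.

```python
def peak_supply_excursion_k(
    valve_tau_s: float,
    *,
    load_before_w: float = 20e3,           # assumed load before the step
    load_after_w: float = 100e3,           # assumed load after the step
    max_cooling_w: float = 150e3,          # assumed cooling at fully open valve
    thermal_mass_j_per_k: float = 200e3,   # assumed lumped coolant thermal mass
    feedback_gain_per_k: float = 0.05,     # assumed proportional gain
    step_time_s: float = 10.0,
    duration_s: float = 300.0,
    dt_s: float = 0.05,
) -> float:
    """Peak rise of supply temperature above setpoint (K) after a load step."""
    valve = load_before_w / max_cooling_w  # start in thermal equilibrium
    excursion_k = 0.0
    peak_k = 0.0
    for i in range(int(duration_s / dt_s)):
        t = i * dt_s
        load_w = load_after_w if t >= step_time_s else load_before_w
        # Feedforward on load plus proportional feedback on the excursion.
        command = load_w / max_cooling_w + feedback_gain_per_k * excursion_k
        command = min(1.0, max(0.0, command))
        # First-order valve lag, then energy balance on the coolant mass.
        valve += dt_s * (command - valve) / valve_tau_s
        excursion_k += dt_s * (load_w - valve * max_cooling_w) / thermal_mass_j_per_k
        peak_k = max(peak_k, excursion_k)
    return peak_k


if __name__ == "__main__":
    limit_k = 2.0  # hypothetical design limit on the excursion
    for label, tau_s in (("fast valve", 2.0), ("slow valve", 15.0)):
        peak_k = peak_supply_excursion_k(tau_s)
        precool_k = max(0.0, peak_k - limit_k)
        print(f"{label} (tau={tau_s:4.1f} s): peak +{peak_k:.2f} K, "
              f"pre-cooling needed {precool_k:.2f} K")
```

With these assumed numbers, the slow valve's peak excursion is several times the fast valve's and exceeds the hypothetical limit, so the supply temperature would have to be lowered ahead of the load step. That pre-cooling margin is the mechanism through which a valve parameter ends up shifting the chiller's operating point.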

Key Takeaways for Data Center Stakeholders

- Liquid‑cooled AI data centers are tightly coupled systems where local decisions have global impact.

- System‑level simulation is essential for understanding transient behavior and efficiency trade‑offs.

- Models deliver value across design, procurement, and operations.

- Simulation‑ready component models create transparency and trust between manufacturers and owner‑operators.

- Open standards such as Modelica and FMI enable collaboration across organizations and disciplines.

Final Thought on Liquid Cooling Simulation

As AI workloads continue to accelerate, cooling performance directly influences reliability, efficiency, and business outcomes. Liquid cooling in data centers raises the bar for both performance and complexity.

Physics‑based system simulation provides a practical way to navigate this complexity and make informed decisions before they become expensive or irreversible.

In the era of AI data centers, success depends not on optimizing components in isolation, but on understanding the system as a whole.
