Engineering AI that Supports Real Decisions

Executive Summary

- Generic AI can explain engineering concepts, but it does not by itself produce trustworthy, decision-ready analysis.

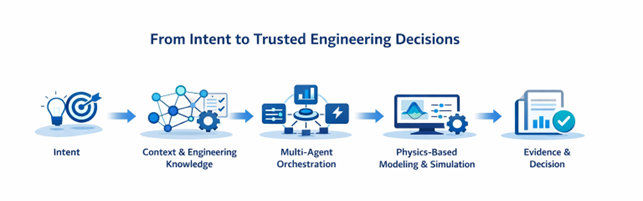

- Useful engineering AI must connect engineering intent, executable physics-based models, study setup, solver behavior, assumptions, and evidence.

- Modelon’s advantage is not only trusted models, but also the engineering context that governs how those models should be used.

- The right workflow should set boundaries, evidence requirements, and approval points without hard-wiring every engineering step.

- This approach helps teams set up studies faster, diagnose failures more efficiently, compare alternatives more clearly, and reuse engineering knowledge over time.

- The long-term value is more repeatable, evidence-based engineering decisions across teams and programs.

Core Idea

In engineering, the workflow should govern the work, not hard-wire every step. The AI agent can propose and adapt the plan, but it should do so inside defined engineering constraints, evidence requirements, and approval points.

Engineering teams rarely struggle because they lack possible answers. They struggle because getting from a question to a trustworthy answer takes work: choosing the right model, deciding which assumptions are acceptable, configuring a study that can actually run, understanding why a run failed, and turning results into something that supports a real decision.

That is where much of an engineering team’s time goes. It is also where generic AI usually stops being useful. A fluent answer from an AI chat assistant may help with orientation, but it is not the same thing as a simulation-ready study, a stable run, or a recommendation an engineer would sign off on.

At Modelon, we see engineering AI as something more concrete: a way to connect engineering intent, trusted physics-based models, reusable engineering know-how, and governed execution so teams can move faster without giving up rigor.

Much of today’s AI in engineering improves individual steps in the work: faster modeling, better search, smoother collaboration, or more task-level automation. Modelon’s direction is broader. The aim is not only to optimize how engineering work is performed, but to improve the decision loop itself, from engineering intent, through physics-grounded execution, to validated recommendations and governed action.

Why Generic AI is Not Enough

A general-purpose AI system can summarize documentation, explain concepts, and draft recommendations. That is useful, and it can remove some friction from engineering work. But in systems simulation, that is not where most of the value is created.

The harder part starts when a team has to turn a question into a credible study. Which model should be used? Which assumptions are acceptable? Which scenarios matter? What should be constrained, and what should be explored? If the run fails, is the problem numerical, structural, or simply a bad setup? If the results look plausible, are they also physically credible?

These are not unusual exceptions. They are part of everyday physics-based engineering work. That is why useful engineering AI cannot stop at reading, summarizing, and generating text. It has to connect to the models, study setup, solver behavior, assumptions, and evidence that determine whether a result can actually be trusted.

Trusted Physics and Engineering Context as the Foundation

For more than 20 years, Modelon has built equation-based modeling technology and reusable Modelica libraries for complex physical systems. That foundation is critical because, in engineering, trust depends on whether a system can represent and predict physical behavior credibly, not on whether it can produce persuasive language.

When AI is connected to executable engineering assets, it can do more than talk about alternatives. It can help evaluate them. It can support scenario analysis, trade-off studies, and model-backed reasoning against systems that can actually be configured, run, and interpreted in ways engineers recognize as credible.

This is also where Modelon Impact matters. Modelon Impact is not just a surface for information. It is where libraries, model variants, study definitions, assumptions, and results become part of a working engineering process.

But trusted models alone are not enough. Good engineers do more than run simulations. They decide which simplifications are acceptable, which scenarios matter, what ranges are realistic, which reference architectures are relevant, and which results deserve skepticism.

Much of that know-how lives in study templates, reference configurations, domain assumptions, evaluation logic, review habits, and best practices that experienced teams apply almost instinctively. This is the reusable engineering context of the system: the layer that connects objectives to reference architectures, constraints, workflows, evaluators, and acceptable operating envelopes.

If AI is going to be useful, that knowledge has to be available in a form the system can work with. The advantage is not only having trusted models. It is having the engineering context that tells the system how those models should be used before the first run even starts.

Governed Agentic Workflows, Not Workflow-as-Implementation

This is where many current agent demos feel incomplete in an engineering context. They treat the workflow as the implementation itself: every step is predefined, every transition is explicit, and each agent call is hard-wired into the flow. That can be reliable, but it is also rigid.

Modelon’s direction is different. The workflow should act as governance, not as a script. It should define lifecycle stages, required artifacts, evidence expectations, and approval points. Within those boundaries, the agent can propose a plan, adapt the sequence of work, and respond to what it learns from actual engineering results.

This distinction matters. Defining a study, running it, diagnosing failures, refining a search, comparing alternatives, and preparing a recommendation for review are different kinds of work. They use different context, different tools, and different checks. A governed agentic approach lets the system separate those concerns without turning the entire process into one hard-coded script.

It also creates a clearer path for trust. The agent can be adaptive, but the engineering process remains governed. Required evidence stays visible. Approvals happen at the right moments. The result is not uncontrolled autonomy. It is intelligent execution inside explicit engineering boundaries.

Mental Model

Workflow = governance. Agent = plan + adaptive execution. Simulation, data processing, and external tools = the execution substrate.
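To make the mental model concrete, here is a minimal sketch of workflow-as-governance. It is an illustration under stated assumptions, not Modelon Impact's API: the `Stage`, `StageRecord`, and `can_advance` names and the evidence labels are hypothetical. The workflow declares what a stage requires; it does not say which steps the agent must take to get there.

```python
from dataclasses import dataclass, field


@dataclass
class Stage:
    """One lifecycle stage: what must exist before the process may move on."""
    name: str
    required_evidence: set[str]
    needs_approval: bool = False


@dataclass
class StageRecord:
    """What the agent and reviewers have actually produced for a stage."""
    evidence: set[str] = field(default_factory=set)
    approved: bool = False


def can_advance(stage: Stage, record: StageRecord) -> tuple[bool, list[str]]:
    """Governance check: returns (ok, reasons the stage is still blocked)."""
    blockers = [
        f"missing evidence: {item}"
        for item in sorted(stage.required_evidence - record.evidence)
    ]
    if stage.needs_approval and not record.approved:
        blockers.append("awaiting approval")
    return (not blockers, blockers)


# Hypothetical stage definition: requirements only, no prescribed steps.
evaluate = Stage(
    "evaluate_evidence",
    required_evidence={"run_log", "constraint_check"},
    needs_approval=True,
)

record = StageRecord()
# The agent chooses its own path; so far it has only attached a run log.
record.evidence.add("run_log")

ok, blockers = can_advance(evaluate, record)
print(ok, blockers)
```

In this sketch the gate stays closed until the constraint check is attached and a reviewer approves, regardless of the order in which the agent did its work.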

What this Looks Like in Practice

From engineering intent to design exploration

Consider a team exploring alternative thermal or control architectures against a set of efficiency and operating constraints. In many organizations, a surprising amount of time still goes into choosing the right library components, selecting a reference architecture, defining realistic parameter ranges, and getting the study into a state that is actually simulation-ready.

In a governed agentic workflow, the lifecycle stages may simply be: define intent, propose a plan, execute the study, evaluate the evidence, and prepare a recommendation. Within that frame, the agent can decide whether to start with a reference configuration in Modelon Impact, generate a design of experiments (DOE), narrow the parameter space, or refine the study after the first results come back. The workflow governs the boundaries; the agent adapts the path.
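As one illustration of the "generate a DOE, then narrow the parameter space" step, the sketch below builds a full-factorial design and filters it after a first look at results. The parameter names, levels, and the narrowing rule are hypothetical, and full factorial is only one of several possible DOE strategies; nothing here reflects how Modelon Impact defines studies.

```python
from itertools import product

# Hypothetical parameter levels for a thermal architecture study.
parameter_levels = {
    "coolant_flow_kg_s": [0.5, 1.0, 1.5],
    "supply_temp_degC": [18.0, 22.0],
    "controller_gain": [0.8, 1.2],
}


def full_factorial(levels: dict[str, list[float]]) -> list[dict[str, float]]:
    """Every combination of the given levels, one dict per study case."""
    names = list(levels)
    return [
        dict(zip(names, combo))
        for combo in product(*(levels[name] for name in names))
    ]


def narrow(cases, keep):
    """Keep only the cases that satisfy a predicate, e.g. after first results."""
    return [case for case in cases if keep(case)]


cases = full_factorial(parameter_levels)
print(len(cases))  # 3 * 2 * 2 = 12 cases

# Assumed finding from a first pass: high flow rates are not worth exploring.
narrowed = narrow(cases, lambda c: c["coolant_flow_kg_s"] <= 1.0)
print(len(narrowed))  # 2 * 2 * 2 = 8 cases
```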

That produces more than speed. It improves the quality of setup. A better-defined study produces better comparisons, fewer wasted iterations, and a clearer path from engineering intent to decision-ready evidence.

From simulation failure to structured diagnosis

A large amount of expert time is consumed not by ideal workflows, but by runs that do not behave as expected. Sometimes the model fails to compile. Sometimes it compiles but will not initialize. Sometimes the solver becomes unstable after a parameter change. Sometimes a result is numerically valid but physically implausible.

Here, workflow-as-governance is especially useful. The stage may require diagnosis evidence before the process can move on, but it does not have to hard-code each troubleshooting step. The agent can inspect what changed since the last successful run, compare parameter ranges and solver settings, check whether the model is being used outside its intended envelope, and propose the next best action. If the confidence is low, the workflow can force escalation or human review.
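The checks described above can be sketched in a few lines. This is a simplified illustration, not a real diagnostic tool: the settings, the validated envelope, and the confidence threshold are all assumed values, and a real agent would draw them from run metadata and model documentation.

```python
def changed_settings(last_good: dict, failed: dict) -> dict:
    """Settings that differ between the last successful run and the failed run."""
    keys = last_good.keys() | failed.keys()
    return {
        key: (last_good.get(key), failed.get(key))
        for key in sorted(keys)
        if last_good.get(key) != failed.get(key)
    }


def outside_envelope(params: dict, envelope: dict) -> list[str]:
    """Parameters outside the (low, high) range the model is intended for."""
    return [
        name
        for name, (low, high) in envelope.items()
        if name in params and not (low <= params[name] <= high)
    ]


def next_action(confidence: float, threshold: float = 0.7) -> str:
    """Governance rule: low confidence forces escalation to a human."""
    return "propose_fix" if confidence >= threshold else "escalate_to_human"


# Hypothetical run settings and intended operating envelope.
last_good = {"solver": "CVode", "tolerance": 1e-6, "inlet_temp_degC": 25.0}
failed = {"solver": "CVode", "tolerance": 1e-4, "inlet_temp_degC": 95.0}
envelope = {"inlet_temp_degC": (5.0, 60.0)}

print(changed_settings(last_good, failed))
print(outside_envelope(failed, envelope))
print(next_action(confidence=0.4))
```

The workflow only requires that this kind of diagnosis evidence exists before the stage can close; which checks to run, and in what order, is left to the agent.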

That is a much more natural fit for engineering work than a rigid sequence of diagnostic steps. The issue is rarely that teams need a prettier explanation of the solver. They need a faster way to narrow the search space and converge on the real cause.

From model insight to operational decisions

The same pattern applies closer to operations. In data center cooling, for example, a team may want to evaluate alternative setpoint strategies before rollout. The goal is not just to optimize a variable in isolation. It is to understand how different choices move the trade-off between energy use, thermal headroom, and operational robustness under realistic conditions.

A governed workflow can define the decision stages: what evidence is required, what constraints cannot be violated, who needs to approve the recommendation, and what must be captured before a change is applied. Inside those rails, the agent can propose scenarios, decide whether more exploration is needed, execute simulations, compare alternatives, and assemble a recommendation package.

The output is not a black-box answer. It is a decision package with visible assumptions, explicit scenarios, and evidence tied back to model runs. That makes it easier both to act and to explain why a given option should move forward.
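A decision package of this kind might be assembled roughly as follows. The field names, the headroom constraint, and the numbers are purely illustrative assumptions for the data center example; they are not results from any real study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScenarioResult:
    run_id: str                    # ties the evidence back to a model run
    setpoint_degC: float
    energy_kwh: float
    min_thermal_headroom_K: float


def build_decision_package(results, min_headroom_K, assumptions):
    """Apply the hard constraint, then recommend the lowest-energy feasible run."""
    feasible = [r for r in results if r.min_thermal_headroom_K >= min_headroom_K]
    rejected = [r.run_id for r in results if r.min_thermal_headroom_K < min_headroom_K]
    best = min(feasible, key=lambda r: r.energy_kwh) if feasible else None
    return {
        "assumptions": list(assumptions),
        "constraint": f"min thermal headroom >= {min_headroom_K} K",
        "evidence_runs": [r.run_id for r in results],
        "rejected_runs": rejected,
        "recommended_run": best.run_id if best else None,
        "status": "pending_approval",  # a human still signs off
    }


# Illustrative numbers only.
results = [
    ScenarioResult("run-001", 20.0, 1200.0, 6.0),
    ScenarioResult("run-002", 24.0, 1050.0, 3.5),
    ScenarioResult("run-003", 27.0, 980.0, 1.0),
]

package = build_decision_package(
    results,
    min_headroom_K=3.0,
    assumptions=["peak summer ambient conditions", "IT load held constant"],
)
print(package["recommended_run"], package["rejected_runs"])
```

Here the lowest-energy option is rejected because it violates the headroom constraint, and the package records why, which is the point of keeping assumptions and run references visible.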

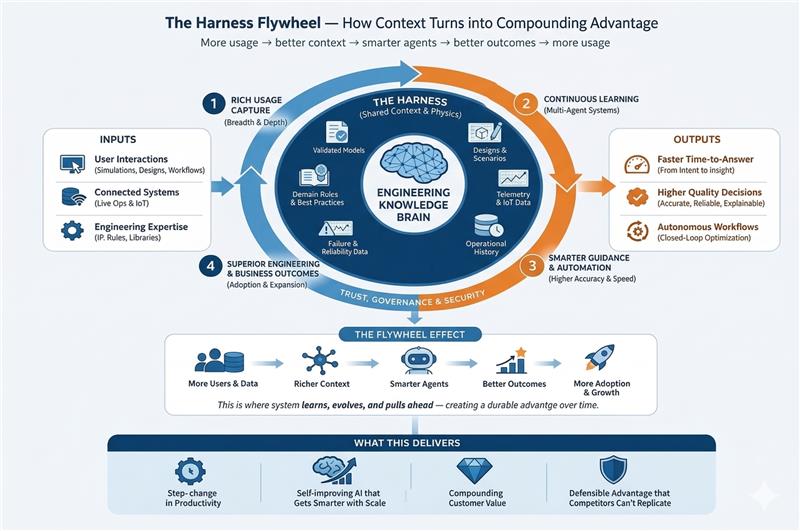

The Harness Flywheel

The immediate value of engineering AI is better support for real simulation work: faster study setup, clearer diagnosis when runs fail, and stronger decision support when alternatives need to be compared. But the longer-term value is bigger than that. In systems simulation, every serious workflow produces more than an answer. It produces context: assumptions that proved useful, scenarios that mattered, failure modes that repeated, and evidence that made a decision easier to trust. If that context is captured and reused, the system does not just help with today’s task. It becomes more useful with every meaningful study.

That is what we mean by the harness. The harness is the governed context around simulation-driven engineering work: validated models, reusable libraries, study definitions, assumptions, constraints, scenarios, solver behavior, operational history, and the evidence created as teams run studies and make decisions. It is the structure that connects engineering intent to execution and keeps the work reviewable, traceable, and reusable.

Reusing that context creates the Harness Flywheel: more usage creates richer engineering context; richer context enables better guidance and smarter automation; better outcomes increase trust and adoption; and greater adoption generates more high-value context in return. This is why Modelon’s opportunity is broader than task automation. The goal is not only to help engineers work faster in the moment. It is to build a simulation-driven system that gets better as it is used: better at framing studies, better at applying engineering context, better at recognizing familiar issues, and better at producing evidence-backed decisions.

What this Means for Customer Value

- Less manual setup before meaningful engineering work can begin.

- Faster movement from a question to a well-defined study in Modelon Impact.

- Fewer wasted cycles when simulations fail or produce suspicious results.

- Clearer review packages with visible assumptions, evidence, and trade-offs.

- More reusable engineering knowledge across studies, teams, and programs.

Over time, the value is not only faster execution of tasks. It is the Harness Flywheel: evidence accumulates, context gets richer, best practices become easier to use, and better decisions become more repeatable across studies, teams, and programs.

Modelon’s Approach

Modelon starts from the engineering side, not the chatbot side. The foundation is more than 20 years of physics-based modeling, reusable libraries, and simulation expertise. On top of that, we see an opportunity to build AI support that works with real engineering assets, real engineering context, and governed workflows rather than generic prompts alone.

Conclusion

Engineering organizations do not need another generic AI layer that sounds confident in technical language. They need a faster path from question to trustworthy analysis. That means better study setup, better reuse of engineering know-how, faster diagnosis when runs fail, clearer comparison of alternatives, and a stronger link between model behavior, evidence, and the decisions that follow.